Feel the music

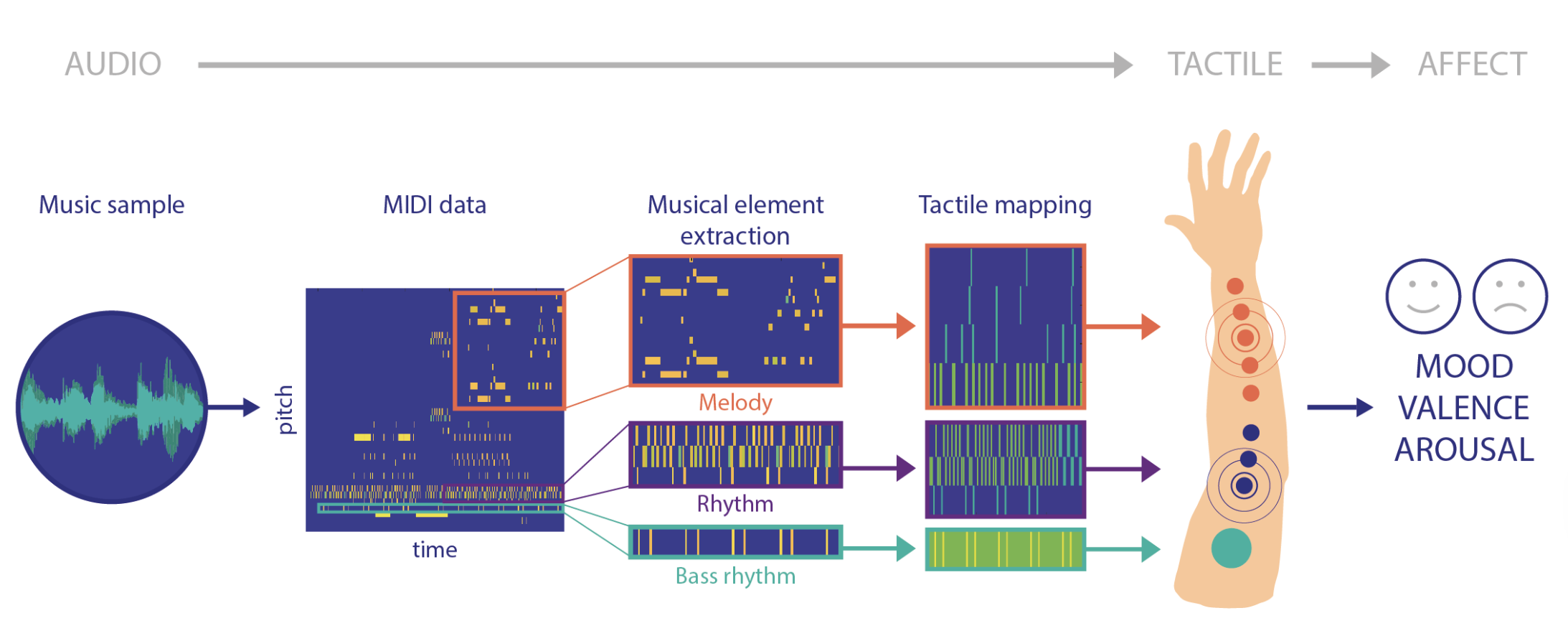

For my Master's project I explored the translation of music from the audio domain to the haptic (touch) domain. Some existing wearables translated music into vibration sensations on the skin, but typically these did not consider mapping features of the music or investigate people's emotive responses to the two modalities. I was interested in whether mapping musical features such as melody, rhythm and volume onto sensations on the skin could convey the emotive quality of a piece of music and enrich the experience of listening to it or, ultimately, be an enjoyable and moving experience in its own right.

You can find out more about the project in the following publication:

2021: FeelMusic: Enriching Our Emotive Experience of Music through Audio-Tactile Mappings. Published in Multimodal Technologies and Interaction. Authors: Alice Haynes, Jonathan Lawry, Christopher Kent and Jonathan Rossiter.