Keeping in touch

In the first part of my PhD I developed and tested wearable interfaces that generate haptic sensations on the skin. From delivering notifications to providing feedback in VR experiences, haptics are increasingly used in the technologies we encounter daily. These sensations are typically vibration-based, as you will most likely have experienced from the buzz of a mobile phone or the juddering of a games controller. But what about generating a wider range of touch sensations, such as a tap, stroke, pinch or tickle? Why are technologies that play with our sense of touch so far behind their audio and visual counterparts? These were the questions that motivated my research.

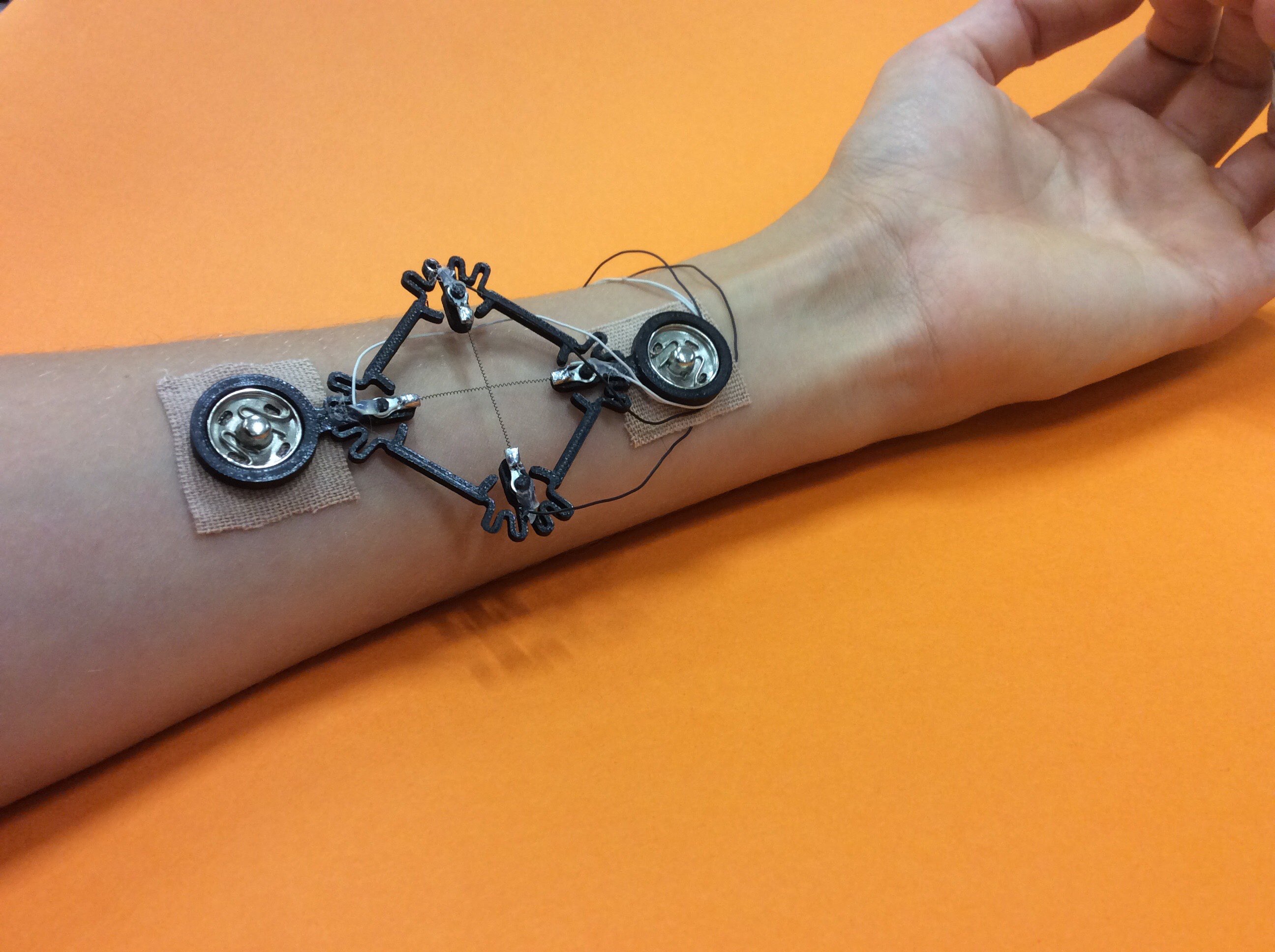

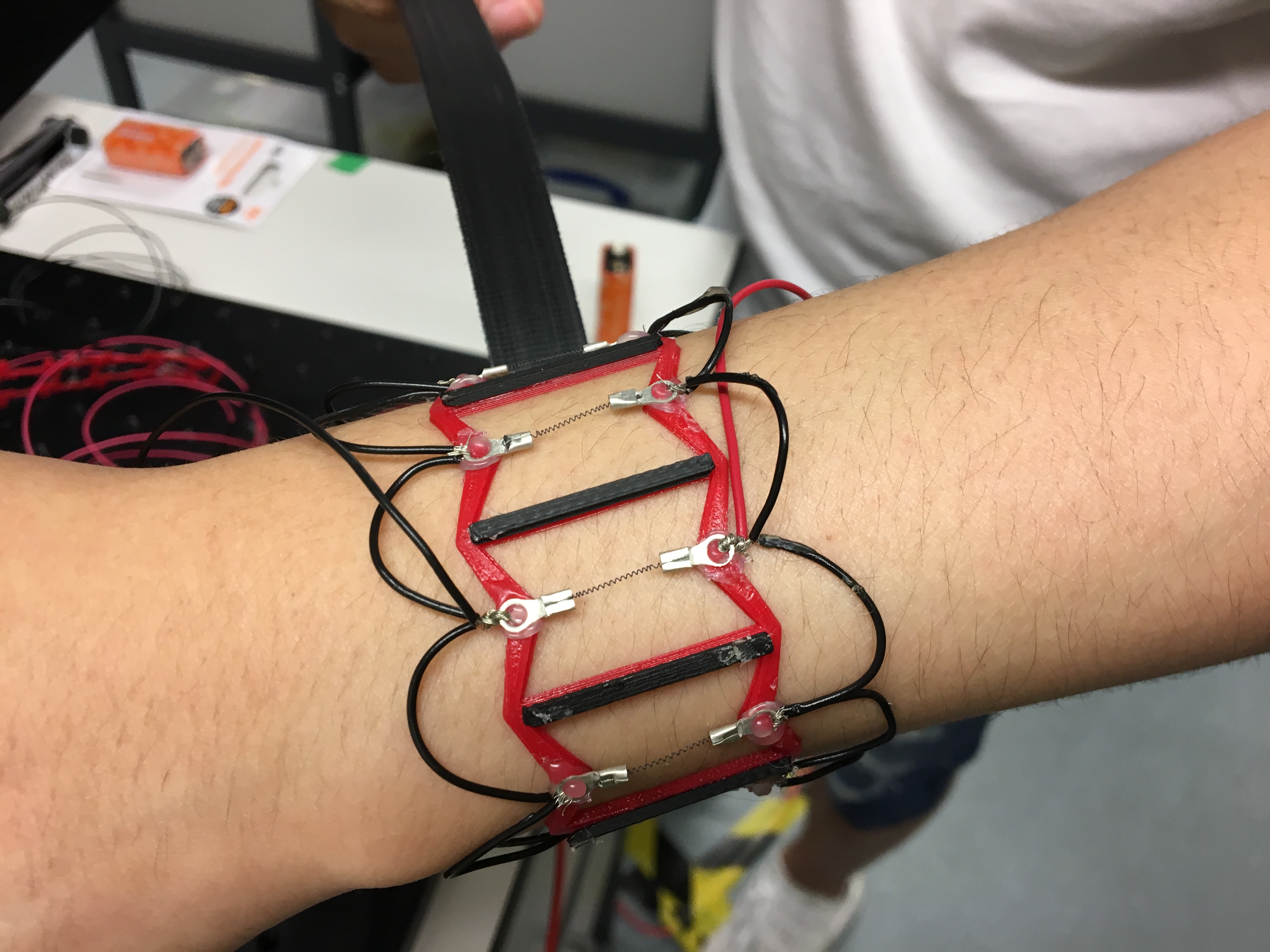

In this project we developed a series of interfaces that could generate human-inspired touch sensations such as a squeeze of the wrist or a touch on the forearm. We used shape memory alloys to generate movement since they are small, silent and easily embedded into a wearable interface. We investigated the use of these sensations as alternatives to vibration-based notifications. The interfaces generate sensations that can vary in subtlety and affective (emotive) quality, allowing for more personalised and expressive communication via touch.

You can find out about some of these interfaces in the following publications:

A Wearable Skin-Stretching Tactile Interface for Human–Robot and Human–Human Communication. Published in IEEE Robotics and Automation Letters. Authors: Alice Haynes, Melanie Simons, Tim Helps, Yuichi Nakamura, Jonathan Rossiter.

In Contact: Pinching, Squeezing and Twisting for Mediated Social Touch. Published in Extended Abstracts of the 2020 CHI Conference on Human Factors in Computing Systems. Authors: Melanie Simons, Alice Haynes, Yan Gao, Yihua Zhu, Jonathan Rossiter.